Real-Time Speech-to-Speech Intelligence

ROSE is our groundbreaking 250-billion-parameter speech-to-speech model, engineered to redefine human-AI vocal interaction. Built upon the powerful Vision multimodal AI, ROSE brings unparalleled naturalness and linguistic versatility to real-time voice communication, so that its speech is indistinguishable from human conversation. With support for over 200 languages, ROSE breaks down communication barriers, enabling seamless and emotionally rich dialogues across the globe.

What is ROSE?

ROSE represents a significant leap in AI-driven vocal technology. Unlike traditional text-to-speech systems that convert text into a synthesized voice, or speech-to-text systems that transcribe spoken words, ROSE operates directly in the auditory domain. It takes spoken input and generates spoken output in real time, preserving intonation, emotion, and subtle vocal nuances. This allows for fluid, dynamic, and truly human-like conversations, powered by advanced deep learning architectures and our proprietary experiential learning algorithms.

Key Features

- Real-Time Speech-to-Speech Conversion: Experience instantaneous voice generation from spoken input, ensuring natural conversational flow.

- Multilingual Support (200+ Languages): Communicate effortlessly across diverse linguistic landscapes, from major global languages to nuanced regional dialects.

- Emotionally Intelligent Vocalization: ROSE captures and conveys a wide spectrum of human emotions, making AI interactions genuinely empathetic and engaging.

- Persona Customization: Define and deploy AI agents with unique vocal personas, tailored for specific roles and interactions (e.g., a cheerful sales agent, a calm customer support bot).

- Seamless Telephony Integration: Designed for robust integration with various telephony providers via WebSocket, facilitating inbound and outbound call automation.

- High Fidelity Audio: Delivers crystal-clear audio output that maintains acoustic quality and natural speech rhythm.

- Built on Vision Multimodal AI: Leverages the advanced capabilities of our Vision AI, integrating sophisticated understanding and generation across modalities.

Why ROSE for Voice AI Agents?

Traditional voice AI agents often rely on a cascaded system of separate Speech-to-Text (STT) and Text-to-Speech (TTS) models. This multi-step process introduces latency, room for errors, and a less natural conversational experience. ROSE, as a direct Speech-to-Speech (S2S) model, offers distinct advantages:

- Reduced Hallucination: By operating end-to-end from speech input to speech output, ROSE minimizes the intermediate steps where information can be lost or misinterpreted, leading to significantly fewer hallucinations and more coherent responses than cascaded systems.

- Unparalleled Human-like Sound: ROSE directly understands and generates the nuances of human speech, including prosody, intonation, and emotional expression. This results in voice AI agents that don’t just speak words, but convey meaning and emotion with a voice that is truly human-like.

- Seamless Telephony Integration via WebSockets: We’ve engineered ROSE with native WebSocket integration, ensuring it can seamlessly connect across various telephony systems. This direct, real-time audio stream capability is crucial for high-performance voice AI agents, eliminating delays and enabling fluid, natural dialogues in customer service, sales, and beyond. This integration ensures your voice AI agents are always ‘on’ and responsive, providing an exceptional conversational experience.
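
Streaming audio over a WebSocket usually means cutting the call audio into small fixed-duration frames and sending each one as a binary message. The sketch below shows only that framing step. The 8 kHz sample rate, 16-bit mono PCM encoding, and 20 ms frame length are assumptions chosen as common telephony defaults, not documented ROSE parameters, and `frame_size_bytes` and `iter_frames` are hypothetical helper names.

```python
from typing import Iterator

# Assumed audio format; none of these values come from the ROSE documentation.
SAMPLE_RATE_HZ = 8000   # narrowband telephony audio
BYTES_PER_SAMPLE = 2    # 16-bit mono PCM
FRAME_MS = 20           # duration of one frame


def frame_size_bytes(sample_rate_hz: int = SAMPLE_RATE_HZ,
                     frame_ms: int = FRAME_MS,
                     bytes_per_sample: int = BYTES_PER_SAMPLE) -> int:
    """Return the number of bytes in one fixed-duration PCM frame."""
    samples_per_frame = sample_rate_hz * frame_ms // 1000
    return samples_per_frame * bytes_per_sample


def iter_frames(pcm: bytes, frame_bytes: int) -> Iterator[bytes]:
    """Yield fixed-size frames, padding the last partial frame with silence."""
    if frame_bytes <= 0:
        raise ValueError("frame_bytes must be positive")
    for start in range(0, len(pcm), frame_bytes):
        chunk = pcm[start:start + frame_bytes]
        if len(chunk) < frame_bytes:
            # Zero bytes are silence in signed 16-bit PCM.
            chunk += b"\x00" * (frame_bytes - len(chunk))
        yield chunk
```

Each yielded frame would then be sent as one binary WebSocket message. The send call is left out because the endpoint URL and message format are not specified here.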

How ROSE Works

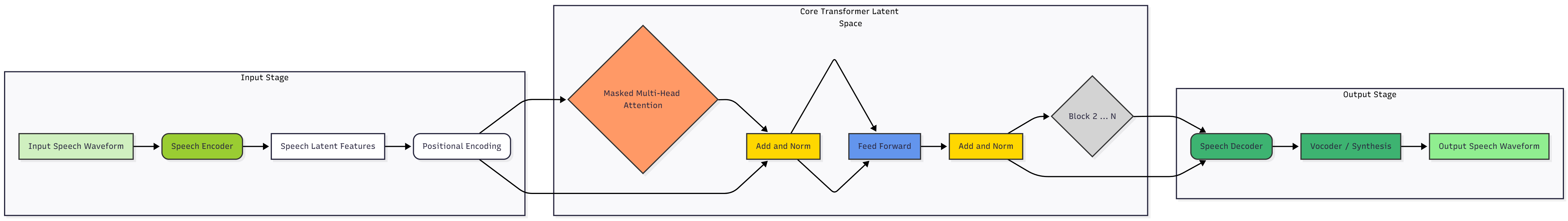

ROSE processes incoming audio streams natively in the acoustic domain, without converting them into text at any stage. The model operates through a high-speed transformer-based pipeline that analyzes, understands, and generates speech directly in its latent representation space.

- Speech Encoding (Input Stage): Incoming waveforms are encoded into high-dimensional speech latent features, capturing both linguistic information and paralinguistic information such as emotion, tone, and speaker intent.

- Contextual Understanding (Transformer Core): These latent features are processed by a multi-layer transformer that models context, semantics, and expressive cues across time. The attention layers allow the model to interpret meaning and emotional tone while maintaining real-time responsiveness.

- Speech Decoding (Output Stage): The processed latent representations are passed to a speech decoder, which reconstructs natural, expressive audio using a neural vocoder or synthesis module.

- Real-Time Interaction: Since ROSE never converts speech into text, it achieves ultra-low latency and maintains emotional fidelity, enabling fluid, human-like conversations.
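
The data flow of the stages above can be sketched as a small streaming loop in which audio frames go in, latent vectors are combined with attention, and audio frames come out, with no text in between. This is a toy illustration only, not ROSE's architecture: the function names `encode`, `attend`, `decode`, and `respond` are hypothetical, the two-number "latent" (energy and zero-crossing rate) stands in for learned high-dimensional features, a single attention step stands in for the multi-layer transformer, and a sine generator stands in for the neural vocoder.

```python
import math
from typing import List

Frame = List[float]   # one frame of audio samples in [-1.0, 1.0]
Latent = List[float]  # toy latent vector: [rms_energy, zero_crossing_rate]


def encode(frame: Frame) -> Latent:
    """Toy encoder: summarize a frame by RMS energy and zero-crossing rate."""
    if len(frame) < 2:
        raise ValueError("frame needs at least two samples")
    rms = math.sqrt(sum(s * s for s in frame) / len(frame))
    crossings = sum(1 for a, b in zip(frame, frame[1:]) if (a < 0) != (b < 0))
    return [rms, crossings / (len(frame) - 1)]


def attend(history: List[Latent]) -> Latent:
    """Single-query scaled dot-product attention.

    The newest latent is the query; every latent seen so far (including the
    newest) serves as both key and value, so no future frames are used.
    """
    query = history[-1]
    scale = math.sqrt(len(query))
    scores = [sum(q * k for q, k in zip(query, key)) / scale for key in history]
    peak = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - peak) for s in scores]
    total = sum(weights)
    return [sum(w * v[i] for w, v in zip(weights, history)) / total
            for i in range(len(query))]


def decode(context: Latent, frame_len: int, sample_rate: int = 8000) -> Frame:
    """Toy decoder: a sine tone whose amplitude is the context energy.

    The frequency is recovered from the zero-crossing rate, since a sine at
    f Hz crosses zero about 2f times per second.
    """
    amplitude, zcr = context
    freq_hz = zcr * sample_rate / 2
    return [amplitude * math.sin(2 * math.pi * freq_hz * n / sample_rate)
            for n in range(frame_len)]


def respond(frames: List[Frame]) -> List[Frame]:
    """Process frames one at a time: encode, attend over history, decode."""
    history: List[Latent] = []
    output: List[Frame] = []
    for frame in frames:
        history.append(encode(frame))
        output.append(decode(attend(history), len(frame)))
    return output
```

Because each output frame depends only on the frames received so far, the loop can emit audio as soon as each input frame arrives, which is the property the real-time claim relies on.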

© 2025 AIvoco | ROSE - Real-Time Speech to Speech Intelligence